AI Governance Regulations in the US: What You Need to Know

Jadon Montero

//

March 4, 2026


A guide to the state laws currently shaping AI governance and what it takes to actually comply, plus why this matters way beyond fines.

The Big Idea

- The federal government has largely stepped back from AI regulation, so states are filling the void fast.

- Colorado's AI Act (SB 24-205, a.k.a. CAIA) is the most comprehensive U.S. AI law to date, and it goes into effect June 30, 2026.

- Every compliance requirement in CAIA assumes you have complete visibility into your AI systems. Most organizations don't.

- NIST AI RMF alignment is emerging as the safe harbor mechanism across state laws, much like the NIST Cybersecurity Framework has for data security. (ISO 42001 functions similarly, and we explain the differences here.)

- Having an AI policy isn't the same as being able to enforce one. New technological capabilities are needed to meet these requirements.

- Meeting legal and compliance requirements as a business is important; empowering and protecting real humans matters most.

A new technology hits the market. We need new laws to govern it. The federal government drags its feet, so states move first. Businesses scramble to keep up with a patchwork of overlapping state requirements while waiting for unified guidance that may never come.

Stop us if you've heard this one before.

Anyone who observed how data privacy law evolved in the U.S. over the last decade or so will find the current AI regulatory moment eerily familiar. We still have no federal omnibus privacy law, just a growing pile of bandaids: HITECH, TCPA, COPPA. To fill the void, state frameworks became the de facto standard for U.S. companies, often alongside international ones like GDPR (by necessity or default).

The same pattern is playing out with AI, only faster. Federally, it's like watching a game of ping-pong:

Volley: The Biden administration's 2023 Executive Order establishes a framework around safety and civil rights for AI.

Return: The Trump administration's January 2025 order on AI repeals key provisions in favor of lighter-touch oversight.

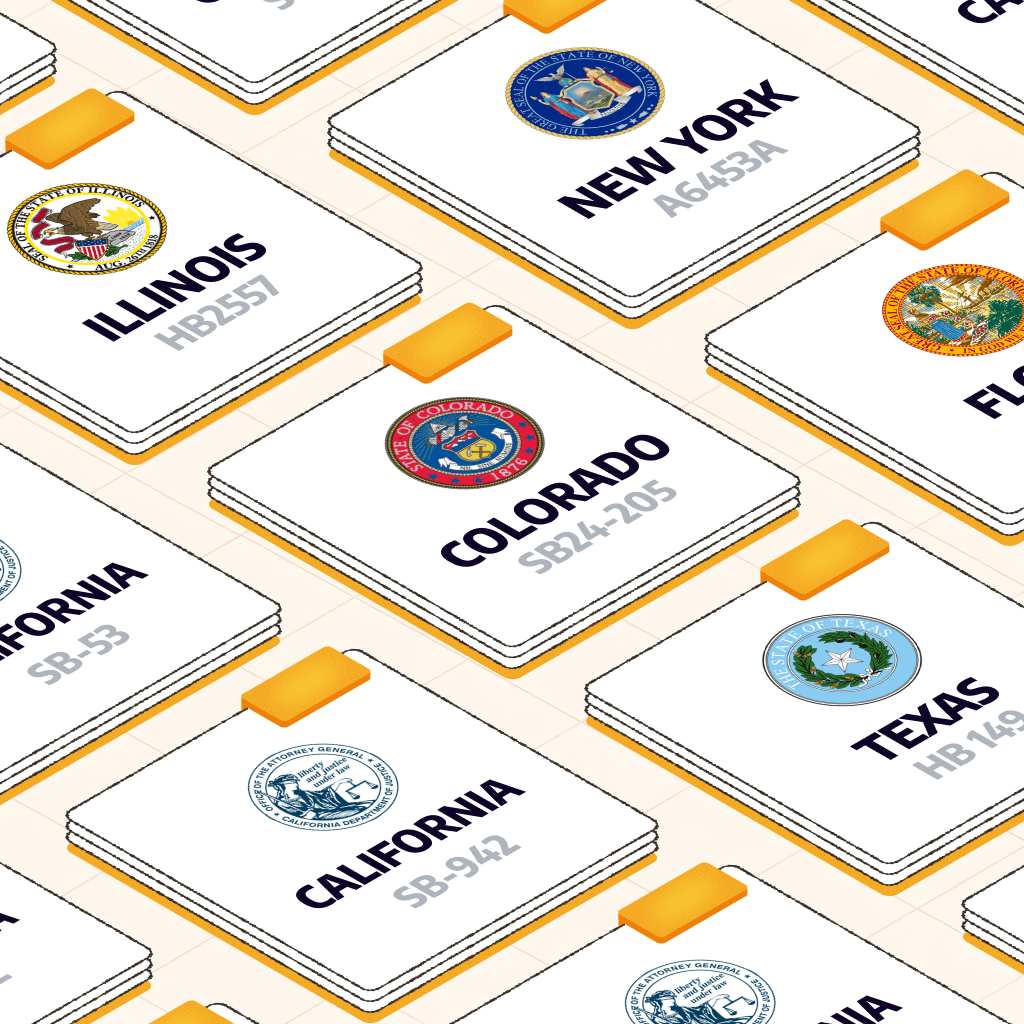

States aren't waiting to see how the game plays out. Colorado, California, Texas, and Illinois have all confidently served up private-sector AI governance regulations. Many more are warming up on the sidelines.

Meanwhile, a company with employees in Denver, customers in California, and operations in Texas may already be subject to three overlapping regulatory regimes.

And Colorado's law goes into effect June 30, 2026. The odds of comprehensive federal legislation arriving before then are slim.

So if your organization uses AI to make decisions that affect people across hiring, lending, healthcare, insurance, education, or housing, it's time to act.

This guide covers which laws apply, what they actually require, and where most organizations stand today.

The Laws You Need to Know

Colorado's CAIA: The Most Comprehensive U.S. AI Law to Date

Colorado's AI Act (SB 24-205 or CAIA), effective June 30, 2026, is the first comprehensive state law in the U.S. to regulate how AI systems are deployed in the private sector. Other states are watching it closely because it sets a higher bar than any current U.S. AI law.

Who CAIA affects

The law applies to any organization deploying "high-risk AI," which means systems making or substantially influencing consequential decisions affecting Colorado residents.

"Consequential" covers a lot of ground: employment, housing, healthcare, financial services, education, insurance, legal services, and government services all qualify.

Geographic reach matters here, too, just as it did when California's privacy laws rolled out. CAIA doesn't require your company to be headquartered in Colorado. It applies to anyone doing business in the state that deploys a qualifying AI system. In practice, that means most companies operating anywhere in the U.S. need to pay attention.

What CAIA Requires (and of Whom)

For deployers (companies deploying AI systems), compliance means:

- A documented risk management program aligned with NIST AI RMF or ISO 42001

- Impact assessments completed before deployment, annually, and within 90 days of any substantial modification

- Consumer disclosures and meaningful appeal rights for adverse decisions

- Ongoing monitoring for algorithmic discrimination
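The timing rule for impact assessments in the list above is easy to get wrong on a calendar, so here is a minimal sketch of how a team might track it. It assumes "annually" means one year after the last completed assessment and that the 90-day clock starts on the modification date; both are our reading for illustration, not legal advice. The function names are hypothetical.

```python
from datetime import date, timedelta
from typing import Optional

MODIFICATION_WINDOW_DAYS = 90  # "within 90 days of any substantial modification"


def add_one_year(d: date) -> date:
    """Same calendar day next year; Feb 29 falls back to Feb 28."""
    try:
        return d.replace(year=d.year + 1)
    except ValueError:
        return d.replace(year=d.year + 1, day=28)


def next_assessment_due(last_assessment: date,
                        modified_on: Optional[date] = None) -> date:
    """Earliest of the annual deadline and the post-modification deadline."""
    annual_due = add_one_year(last_assessment)
    # A modification on or before the last assessment is already covered by it.
    if modified_on is None or modified_on <= last_assessment:
        return annual_due
    return min(annual_due, modified_on + timedelta(days=MODIFICATION_WINDOW_DAYS))


# No modification: the annual cycle applies.
print(next_assessment_due(date(2026, 6, 30)))                    # 2027-06-30
# A substantial modification pulls the deadline forward.
print(next_assessment_due(date(2026, 6, 30), date(2026, 9, 1)))  # 2026-11-30
```

The pre-deployment assessment isn't modeled here; it is simply a gate that has to be cleared before a system goes live.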

You might be wondering what qualifies as “deploying” and who is considered a “deployer” under this law. It's a great question.

CAIA distinguishes between developers (those building AI systems) and deployers (those using them to make decisions).

"Deployer" covers more ground than it might seem at first. If your organization uses an AI system to make consequential decisions, you're a deployer under the law, whether you built that system in-house or licensed it from a vendor. That third-party hiring tool, loan decisioning platform, or AI-powered insurance underwriting system? All yours to govern.

Developers of AI tools are not responsible for carrying out impact assessments or running risk management programs under this law. They are required to give their customers (deployers) what they need to ensure compliance. This includes documentation like model cards and known risk disclosures to help deployers complete their impact assessments.

AI technology vendor contracts should reflect this. Make sure your AI vendors keep you informed about how their systems work, what risks they've identified, and if they discover discrimination issues after deployment.

The Penalty Math

We're not big on FUD, but CAIA isn't a law to trifle with. Violations are treated as unfair trade practices, with fines of up to $20,000 per violation and no maximum cap. Following Colorado Consumer Protection Act precedent, violations will be counted per affected consumer.

Let's do some quick math: A failure to notify 1,000 consumers of an adverse AI decision could mean up to $20 million in exposure. (And, as we know from past data incidents, that's a drop in the bucket.)

To sharpen the point, Colorado originally had a penalty cap of $500,000 but removed it in 2019, which means there's no ceiling for fines. There's a 60-day cure window after the Attorney General provides notice, but penalties start stacking once that window closes. It can add up fast.
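To make the penalty math above concrete, here is a small sketch of the upper-bound calculation. It uses only the figures cited in this section (up to $20,000 per violation, counted per affected consumer, no cap) and assumes every violation draws the maximum fine, which a real enforcement action may not. The function name is our own.

```python
# Figure from this section: fines of up to $20,000 per violation.
MAX_PENALTY_PER_VIOLATION_USD = 20_000


def max_exposure_usd(affected_consumers: int,
                     violations_per_consumer: int = 1) -> int:
    """Worst-case exposure if each violation is fined at the maximum."""
    if affected_consumers < 0 or violations_per_consumer < 0:
        raise ValueError("counts must be non-negative")
    # No overall cap, so exposure scales linearly with the violation count.
    return (affected_consumers * violations_per_consumer
            * MAX_PENALTY_PER_VIOLATION_USD)


# The example from the text: 1,000 consumers not notified of an adverse decision.
print(f"${max_exposure_usd(1_000):,}")  # $20,000,000
```

Swap in your own consumer counts to see how quickly a single process failure scales.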

The Safe Harbor

Thankfully, Colorado built a meaningful on-ramp into the law. Companies that align their AI governance programs with NIST AI RMF or ISO/IEC 42001 receive a rebuttable presumption of "reasonable care." That's real legal protection, and we cover why it matters across all the state laws, not just Colorado's, here.

California: Multiple Laws, Multiple Timelines

California's also moving fast here. This isn't surprising; as with CCPA (now CPRA), the home of Silicon Valley tends to be out front on technology-centric legislation.

That said, California is taking a different approach than Colorado. Rather than one comprehensive act, it has so far enacted several targeted laws that together create substantial (but achievable) obligations.

Senate Bill 942 (AI Transparency Act), Senate Bill 53, and Assembly Bill 2013 all took effect January 1, 2026. Together they require AI-generated content disclosure and watermarking, safety and security protocols for frontier model developers, and public disclosure of training data used in generative AI systems.

SB 53 also encourages NIST AI RMF alignment and appears to offer safe harbor for organizations that can demonstrate compliance.

California's Automated Decision-Making Technology (ADMT) AI governance regulations are also advancing, with enforcement expected in 2027. Those regulations would require notice before using AI for consequential decisions (another key difference vs. CAIA) and opt-out rights for consumers. For example, if you're a life insurance company using an AI tool to decide what plans to offer your users based on information about them, you would need to disclose the use of AI prior to putting the tool into action for your business.

Though not quite as aggressive as Colorado, this is another doozy in the monetary consequences department. Plus California makes a distinction here focused on intent: Violations under ADMT carry fines of up to $2,500 per unintentional violation and $7,500 per intentional violation.

It's worth noting that intent can be very hard to parse and prove without the right technical systems in place. We know this from direct experience building and operating in this space (and you probably know, too, from dealing with insider threats).

This is a big part of why we built Maro, which functions as a cognitive security agent, silently observing intent, context, and behavioral cues. Proving intent will be crucial to meeting state regs and minimizing potential liability. (It's also key for determining how to respond to a violation internally. There's a big difference between someone using AI to steal data on purpose and an accident that can be quickly corrected with a well-timed reminder.)

Texas: The Responsible AI Governance Act

Texas's Responsible AI Governance Act (TRAIGA, HB 149), effective January 1, 2026, focuses on prohibiting specific harmful AI practices across both public and private sectors, particularly intentional discrimination. (Noticing a trend?)

It's a narrower regulation than Colorado's, but it also includes one significant feature: an affirmative defense for companies aligned with the NIST AI Risk Management Framework.

NIST alignment is explicitly a safe harbor under Texas law.

Insight

The NIST safe harbor pattern is going to keep coming up. (And honestly, we're here for it.)

Illinois: AI in Employment

Illinois has been regulating AI in employment longer than most states. Its Artificial Intelligence Video Interview Act has been in effect since 2020, requiring employers to notify candidates when AI is used to evaluate video interviews, obtain consent, and limit how that footage is shared. We simply love to see it.

It's obviously much narrower in scope than Colorado's law, but it set an early precedent for AI accountability in hiring. It also established Illinois as a state willing to legislate AI before it was a mainstream policy conversation. Go Prairie State!

The Rest of the Map

The state-level activity goes well beyond the headliners. The volume of legislation in progress is jaw-dropping (in a good way).

New York's Responsible AI Safety and Education Act (RAISE) is advancing through the legislature with provisions that closely mirror Colorado's risk-based approach. (Again, unsurprising, as NY often leads the pack on legislation like this.)

Utah enacted its AI Policy Act in 2024, becoming one of the first states to require disclosure when consumers interact with AI. Several other states are working through active AI governance regulations right now.

Florida has proposed two pieces of AI legislation: one (CS/SB 482) that aims to protect consumers with an “Artificial Intelligence Bill of Rights,” and another (SB 484) to protect citizens from the financial and resource implications of hyperscale data centers (which, as you know, are required to deploy AI). The first proposal is wide-ranging, including protections against deepfakes, misuse of personal information, insurer usage of AI in claims, and more. It also includes data security and privacy provisions, as well as increased parental control requirements. The data centers proposal is intended to protect Floridians from paying for hyperscale data centers via utility bills, remove tax subsidies for large tech companies, and protect Florida's natural resources. The components seem somewhat disjointed but broad, so both are worth keeping an eye on.

Need to stay up to date on state regs? We'll keep posting about AI governance regulations here and on LinkedIn. You may also want to keep an eye on the IAPP, whose U.S. State AI Governance Legislation Tracker monitors this activity across the country. Their chart tracking regulations can help you visualize overlap and trends.

AI Governance and Guidance, Delivered

Want updates on AI governance regulations and other need-to-know information about this rapidly-evolving space delivered right to your inbox? Sign up for AI Risk, Actually, a biweekly newsletter below.

Throughlines

The trends here are consistent, with states:

- Modeling new laws on Colorado's framework

- Incorporating NIST alignment as a safe harbor

- Focusing on consequential decisions affecting employment, housing, healthcare, and financial services

Colorado sets a great example by focusing on the areas where AI has the most potential to do harm if not carefully monitored and regulated.

Federal preemption (i.e., passing laws that override or invalidate state laws) may eventually simplify this picture. It hasn't yet, though, and if we're going by track record, “wait and see” isn't the move here.

Bottom line

Any organization using AI to make decisions that affect people in material ways should assume the regulatory environment will keep expanding. It's time to move proactively to meet requirements.

Pitfalls You'll Want to Avoid

Blocking Isn't a Solution

When new regulations emerge or the risks of a new technology become obvious, there's always a temptation to simply tell employees, “No.” But, as in so many other contexts, abstinence-only education is not a solution. Forbidding AI usage will only drive it underground, making it even harder to monitor usage, prevent misuse, and ensure compliance.

Visibility Comes Before Governance

Most organizations using AI today aren't yet in compliance with Colorado's requirements. Many (understandably) don't have a clear picture of what they need to do to get there.

Our goal here is to help make this picture clearer.

Every compliance requirement under CAIA assumes you have complete visibility into your AI systems and usage. They assume you know exactly what AI is being used, how, by whom, when, and for what purposes. That baseline assumption is baked into requirements around impact assessments, risk management programs, discrimination monitoring, and consumer disclosures.

But we all know new technologies, especially game-changers for employee productivity, are often adopted faster than IT can create guardrails and policies. AI might be breaking the mold in many ways, but it's part of a long, storied trend in IT security.

Employees already use personal ChatGPT and Claude accounts, Copilot features embedded in familiar tools, AI functionality built into SaaS platforms, and what feels like a fresh crop of AI-powered applications every week. A good chunk of this is happening without IT or security's knowledge.

When an HR team uses an AI-powered screening tool to filter job applicants or a loan officer uses an AI assistant to evaluate applications, they're just trying to do their jobs better and faster. That makes perfect sense.

But since these high-risk scenarios are the ones Colorado and other states are targeting, it's time to figure out how to empower employees to use AI tools safely.

The first step is knowing what's happening at your organization. Visibility first:

- Once systems are inventoried, you can conduct an impact assessment.

- Once you know what's in scope, you can run a risk management program.

- Once you have a mechanism for ongoing oversight, you can demonstrate reasonable care to a regulator.
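The visibility-first steps above start with an inventory, so here is a minimal sketch of what one might look like in code. The record fields, system names, and the `likely_high_risk` helper are all hypothetical; the decision areas are the ones this guide lists as "consequential" under CAIA. Treat the flag as a triage hint, not a legal determination.

```python
from dataclasses import dataclass
from typing import List, Optional

# Decision areas this guide lists as "consequential" under CAIA.
CONSEQUENTIAL_AREAS = {
    "employment", "housing", "healthcare", "financial services",
    "education", "insurance", "legal services", "government services",
}


@dataclass(frozen=True)
class AISystem:
    name: str
    decision_area: Optional[str]      # None if it makes no consequential decisions
    affects_colorado_residents: bool
    known_to_it: bool                 # False means it was found as shadow AI


def likely_high_risk(system: AISystem) -> bool:
    """Rough triage: consequential decision area plus Colorado residents affected."""
    return (system.affects_colorado_residents
            and system.decision_area in CONSEQUENTIAL_AREAS)


# Illustrative inventory; every entry here is made up.
inventory: List[AISystem] = [
    AISystem("resume-screener", "employment", True, False),
    AISystem("email-drafting-assistant", None, True, True),
    AISystem("loan-decisioning-platform", "financial services", True, True),
]

needs_assessment = [s.name for s in inventory if likely_high_risk(s)]
shadow_ai = [s.name for s in inventory if not s.known_to_it]

print(needs_assessment)  # ['resume-screener', 'loan-decisioning-platform']
print(shadow_ai)         # ['resume-screener']
```

Notice that the shadow AI tool is also one of the systems that needs an impact assessment. That overlap is exactly the situation discovery is meant to surface.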

Tip

Start with behavior observability, assess your enforcement readiness, then layer on governance. A great first step is to complete a Shadow AI Assessment.

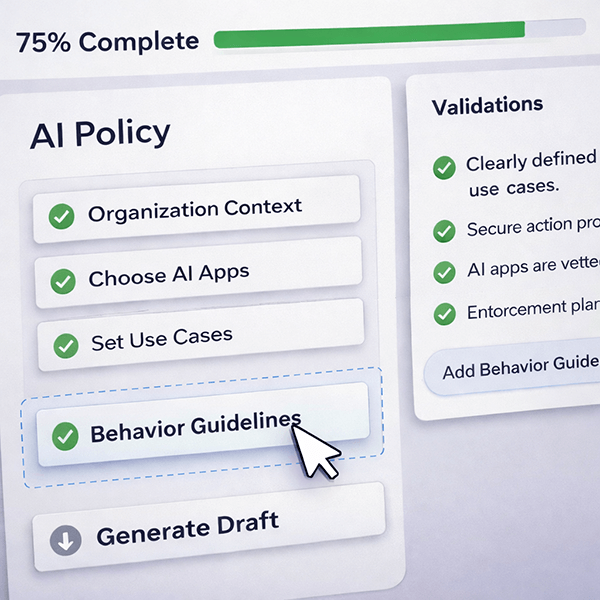

Documentation Isn't Compliance

One of the most important distinctions in all types of IT compliance, and one that gets extra sticky with AI, is the difference between having a policy and being able to enforce one.

CAIA, like many other laws, doesn't stop with asking for documentation. It requires ongoing monitoring, impact assessments updated annually and after substantial changes, and the ability to intervene and correct when systems produce outcomes that risk discriminatory harm.

The compliance infrastructure CAIA demands is operational, not just documentary (and that's good; otherwise it would have no teeth).

As you know, a well-written AI policy in a PDF doesn't satisfy a requirement for continuous monitoring. An impact assessment from last year doesn't satisfy the requirement for one completed before the next deployment. And telling a regulator what your governance program says isn't the same as demonstrating what it does.

All easier said than done (at least without the right tools).

Most organizations today have some AI usage guidelines, often inherited from broader IT or data governance policies. A few have the discovery capabilities to find shadow AI, the monitoring infrastructure to track usage at the level of detail impact assessments require, or the ability to address the policy enforcement chasm. Most don't, and that's exactly why we built Maro: to keep your workplace safe while providing security and GRC teams with visibility, context, and control.

To get where we need to go, all organizations need to have AI visibility, monitoring, and enforcement capabilities. And we do mean all: Colorado's legislation has an exemption for businesses with fewer than 50 employees, but it's narrow. As soon as you start customizing a model with proprietary data (which can easily happen by accident without enforceable policy), the exemption evaporates.

Understanding Frontier Models vs. Public/Open-Weights Models

In the context of these AI governance regulations and general AI security best practices, it's worth understanding (and ensuring your employees understand) the difference between engaging with a public LLM and using a frontier model.

Frontier Models (e.g., GPT-4, Claude 3 Opus), while offering maximum reasoning, advanced multimodal capabilities, and massive context windows, pose specific risks:

- High-Stakes and Complex Applications: Using these for potentially sensitive purposes (like those described in CAIA) will require strict data governance and validation protocols.

- Closed-Source: You don't get a ton of transparency into how these models work, so auditing usage for bias, data leaks, and security vulnerabilities can be tricky.

- Cloud-Hosted: Data processed by these models sits with a third-party vendor, which means you need to make sure there are clear contractual safeguards in place around data privacy, storage/location, and breach notification.

Public/Open-Weights Models (e.g., Llama 3, Mistral, Gemma 3, DeepSeek) offer an alternative. These models allow users to run, modify, and deploy advanced LLMs locally, ensuring data privacy and no API costs. They offer high performance on personal hardware or in private servers but still require careful management:

- Ideal for Privacy/Specialization: They are often preferred for applications involving sensitive data because they support local hosting (on-premise or within a private cloud). This ensures greater data control, residency, and privacy, significantly lowering the long-term risk of third-party data exposure.

- Lower Long-Term Costs: Local hosting also helps control operational expenditure but shifts the burden of maintenance, security patching, and compliance entirely to the organization.

- Seeding with Open Source Data: Often these LLMs are seeded with open source or publicly available data. This can present its own issues: data poisoning and backdoors, data quality and model performance issues, and privacy and legal risks if the public data contains sensitive or personal information, copyrighted material, or IP. To combat these risks, experts recommend using curated datasets, implementing rigorous validation processes, employing "machine unlearning" to remove unsafe information, and utilizing "human-in-the-loop" oversight to identify and correct biases.

Employee Education (Risk-Based Usage Policy): Employees must be trained on which model types are approved for different data classifications:

- Never Use for Confidential Data: Employees should be educated on the importance of not entering proprietary, PII, or regulated data into any external Frontier Model unless explicitly approved by IT/Compliance and covered by a specific, secure contract.

- Approve Open-Weights for Sensitive Internal Use: Employees should be directed to use locally hosted open-weights models for internal applications that handle sensitive information, enabling the organization to (at least theoretically) maintain full control over the data environment.

Risk = Data Location: The core lesson is that data entered into a Frontier Model leaves your controlled environment, dramatically increasing data privacy and security risks. Using Open-weights models locally keeps the data in-house.
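The risk-based usage policy described above can be expressed as a simple rule, sketched below. The two data classes, the two hosting types, and the `approved_exception` flag are simplifications we've assumed for illustration; a real policy would likely have more tiers and would be enforced by tooling rather than a lookup.

```python
from enum import Enum


class DataClass(Enum):
    NON_SENSITIVE = "non_sensitive"
    SENSITIVE = "sensitive"          # proprietary, PII, or regulated data


class ModelHosting(Enum):
    EXTERNAL_FRONTIER = "external_frontier"    # vendor-hosted; data leaves your environment
    LOCAL_OPEN_WEIGHTS = "local_open_weights"  # hosted on-premise or in a private cloud


def is_use_allowed(data: DataClass, hosting: ModelHosting,
                   approved_exception: bool = False) -> bool:
    """Apply the rule of thumb: sensitive data stays in-house unless explicitly approved.

    approved_exception stands for explicit IT/Compliance approval backed by a
    specific, secure contract.
    """
    if hosting is ModelHosting.LOCAL_OPEN_WEIGHTS:
        return True  # data stays inside the controlled environment
    if data is DataClass.SENSITIVE:
        return approved_exception
    return True


print(is_use_allowed(DataClass.SENSITIVE, ModelHosting.EXTERNAL_FRONTIER))        # False
print(is_use_allowed(DataClass.SENSITIVE, ModelHosting.EXTERNAL_FRONTIER, True))  # True
print(is_use_allowed(DataClass.SENSITIVE, ModelHosting.LOCAL_OPEN_WEIGHTS))       # True
```

Writing the rule down this plainly is also a useful test of whether employees could actually follow it.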

The Stakes Beyond Fines

The financial penalties are real. They're also not the only thing at risk.

Discrimination Lawsuits

AI systems that produce discriminatory outcomes in employment, lending, or housing create exposure under existing federal civil rights law, including Title VII, the Fair Housing Act, and fair lending regulations.

And since all eyes are on AI right now, the presence of AI can heighten scrutiny and damages in those cases, not reduce them. Regulators and courts are increasingly sophisticated about how AI systems can replicate and scale bias.

Attorney General enforcement actions are public, so there's no hiding if you get caught in the net. The reputational damage of being named in an AI discrimination proceeding, particularly for companies selling to enterprise customers, can significantly outlast the direct financial penalty.

Lost Deals

Enterprise procurement now regularly requires vendors to demonstrate AI governance capabilities through security questionnaires and vendor risk assessments.

Organizations that can't show documented compliance with applicable AI laws risk losing contracts or being excluded from procurement processes entirely. That business risk often exceeds the direct regulatory penalty.

All of that matters, no doubt, and must be taken into account as you plan for CAIA and laws to come. But there's another big set of risks to consider.

Employee Morale

As we mentioned earlier, blocking AI outright is unlikely to work. Let's say you decide to completely nix its usage at your company, at least for now, to be conservative. This is a totally understandable reflex. But the odds are good your employees won't be happy and it will have a negative effect on morale.

Moreover, they will still want to use AI to get their jobs done efficiently and may turn to their personal devices to do work-related tasks. They might then use AI outputs to make a high-risk decision, like who qualifies for a certain state benefit. If the company gets in trouble for this, is it the employee's fault?

Maybe, but it's a gray zone. Blaming an employee for making an impossible choice isn't good for morale, either.

Back to our earlier point about total abstention as a losing strategy, we don't want to set employees up to fail. It's much better to provide them with clear guardrails around AI use and implement real-time safeguards. This sets them (and you) up for success.

Compliance as a Floor, Not a Ceiling: Ethical and Moral Considerations

Here's another familiar story in the world of privacy and security: Meeting compliance or legal requirements doesn't mean you've gotten all the way to “private” and “secure.” For one, in the age of CI/CD supercharged by AI, technology does evolve faster than regulations possibly could.

Beyond that fact, it's nearly impossible for regulators and framework creators to think through every possible way that data and systems can be misused and abused. It's up to us as the builders and users of these systems and the handlers of this data to understand the implications of what we're doing.

Meeting compliance doesn't necessarily mean you're actually preventing all harm and ensuring true privacy and security. There's no such thing as perfection, but the goal should always be harm reduction and continuous improvement.

Compliance is a solid baseline, but we know your goal is to do the right thing by your employees, customers, partners, users, etc. A crawl-walk-run approach might look like this:

| Getting Started | Meeting Compliance | Assuring Privacy and Security |
|---|---|---|
| Well-written AI policy in PDF form | Continuous monitoring of policy adherence | |
| Completed impact assessment | Impact assessment before any new deployments | |
| Documented governance program | Demonstrable governance program | |

Laws ask you to go beyond documenting to operationalizing. Achieving true security and privacy (and continuously improving) asks you to go beyond theoretical operations to a modern security mindset. That means treating employees as autonomous, capable humans who benefit from guardrails and guidance and treating those affected by technology decisions as humans with fundamental rights.

There's a hierarchy of needs here:

1. People affected by AI usage at these orgs need and deserve to have their data security, privacy, and humanity respected

2. People employed by these orgs need to do their jobs well and efficiently (and today that often means using AI) without causing harm

3. People leading compliance and security at these orgs need to comply with the law and stay out of trouble

A strong AI governance program centers real humans and achieves all of these goals.

Where to Go From Here: June is Soon, but Now is Now

The AI regulatory picture is complex. The strategic direction is clear. The human directive is inarguable. To recap:

- NIST and ISO alignment is a strong bet regardless of how federal preemption debates resolve.

- State-level AI governance regulations are accelerating, not slowing.

- New technical infrastructure is required to comply: from full AI discovery to continuous, comprehensive monitoring to adaptive policies to real-time enforcement.

- We must aim to go beyond compliance all the way to true security and privacy.

- People need clear guidance and guardrails.

To this last point, we're well aware that this is a tall order. Fortunately, there are ways to “fight fire with fire” so to speak. You can dramatically reduce the effort of designing and implementing complex AI security and responsible usage policies (not to mention enforcement mechanisms) by bringing in cognitive security agents. These can protect employees and customers directly in-browser, provide real visibility into human risk vectors, enable you to deploy real-time interventions and guidance, and measure what's working so you can scale your program.

The organizations best positioned for this tidal wave of AI regulation are the ones looking even beyond June 2026 to focus on ideal outcomes (continuous, demonstrable, real privacy and security) and investing in the technical infrastructure to achieve those outcomes.

Colorado's June 30 deadline is less than four months away, but the human risks and needs are already here today. It's go time!

Need to stay on top of AI regulations, security risks, and ways to protect your organization? Sign up for Maro's newsletter below to get need-to-know AI governance regulations and guidance resources dropped right into your inbox.


FAQs About U.S. State AI Regulations

Is NIST AI RMF or ISO 42001 legally required?

No. NIST AI RMF is a voluntary framework. No federal law mandates it. That said, several state laws, including Colorado's CAIA and Texas's TRAIGA, provide explicit safe harbor protection for organizations that align with it. In practice, that makes NIST alignment close to a de facto requirement for any organization deploying high-risk AI in those states. ISO 42001 is also not formally required, but certification gives companies proof that can be very useful in legal and business contexts. Learn more about both here.

What's the difference between NIST AI RMF and ISO 42001?

NIST AI RMF is a flexible, risk-focused playbook best suited for technical teams building and deploying AI. ISO 42001 is a formal, certifiable management system best suited for demonstrating compliance to external parties, like enterprise customers, regulators, and auditors. Most mature organizations will want both. Learn more.

What is algorithmic discrimination?

Algorithmic discrimination occurs when an AI system produces outcomes that unlawfully disadvantage people based on protected characteristics like race, gender, religion, disability, or national origin. It doesn't require intent. A system can discriminate through patterns in training data, flawed model design, or misapplication in context. This is the central harm that Colorado's CAIA, and most other state AI laws, are designed to prevent.

What is an AI impact assessment?

An impact assessment is a formal evaluation of an AI system documenting its purpose, deployment context, data inputs and outputs, potential risks of algorithmic discrimination, and mitigation strategies. Under Colorado's CAIA, deployers must complete one before deployment, annually, and within 90 days of any substantial modification. These assessments need to be retained for three years and made available to the Attorney General on request.

Does Colorado's AI Act apply to generative AI?

It depends on how it's used. CAIA doesn't regulate generative AI specifically. It regulates AI systems that make or substantially influence consequential decisions. If a generative AI tool is being used to help screen job applicants, evaluate loan applications, or make healthcare recommendations, it likely qualifies as high-risk under the law. If it's being used for drafting emails or summarizing documents, it probably doesn't.

What should we do first to prepare for Colorado's AI Act?

Start with discovery. Inventory every AI system your organization uses, including tools your employees are using independently. You can't assess, govern, or disclose AI you don't know about. From there, build your risk management program aligned with NIST AI RMF, conduct impact assessments on in-scope systems, and put monitoring infrastructure in place. The documentation ideally comes last, not first.

What is "high-risk AI" under Colorado's AI Act?

Colorado's AI Act defines high-risk AI as any system that makes or substantially influences a consequential decision affecting a Colorado resident. Consequential decisions include those related to employment, housing, healthcare, financial services, education, insurance, legal services, and government services. If your AI touches any of those areas and your company does business in Colorado, you're likely in scope.

What happens if we're not compliant by June 30, 2026?

Colorado's Attorney General can issue a notice of violation. After that, you have a 60-day cure window to fix the issue. If you don't, penalties begin stacking at up to $20,000 per violation, counted per affected consumer. A single compliance failure affecting a large consumer population can add up to significant exposure quickly.
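For planning purposes, the cure window described above is just date arithmetic. The sketch below assumes the 60 days are calendar days counted from the date of the Attorney General's notice; that counting convention is our assumption, so confirm it with counsel. The helper names are hypothetical.

```python
from datetime import date, timedelta

CURE_WINDOW_DAYS = 60  # cure window after the Attorney General provides notice


def cure_deadline(notice_date: date) -> date:
    """Last day to fix the issue, counting calendar days from the notice."""
    return notice_date + timedelta(days=CURE_WINDOW_DAYS)


def penalties_may_accrue(notice_date: date, today: date, cured: bool) -> bool:
    """True once the window has closed without the issue being fixed."""
    return (not cured) and today > cure_deadline(notice_date)


notice = date(2026, 7, 1)
print(cure_deadline(notice))                                   # 2026-08-30
print(penalties_may_accrue(notice, date(2026, 8, 15), False))  # False
print(penalties_may_accrue(notice, date(2026, 9, 1), False))   # True
```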

Can we just use NIST AI RMF to satisfy Colorado's requirements?

Yes, NIST AI RMF alignment creates a rebuttable presumption of "reasonable care" under Colorado's law. That's meaningful legal protection. ISO 42001 also satisfies Colorado's safe harbor. Either works, but NIST is typically faster to implement and more immediately operational, making it the practical first step for organizations under time pressure.

What is shadow AI, and why does it matter for compliance?

Shadow AI refers to AI tools and systems being used within an organization without official IT or security oversight. Think personal ChatGPT accounts, AI features embedded in SaaS tools, or consumer AI apps used for work tasks. It matters for compliance because Colorado's new AI law requires organizations to assess, govern, and monitor their AI systems. You can't do any of that for systems you don't know exist. Shadow AI is one of the most common reasons organizations find themselves unable to meet compliance requirements even when they have governance programs in place.

Are we responsible for AI systems we buy from vendors, or just ones we build?

Both, but in different ways. Under Colorado's CAIA, "developers" are entities that build or substantially modify AI systems, and "deployers" are entities that use them to make consequential decisions. Both have legal obligations, but the heavier governance burden falls on deployers.

If your organization uses a vendor-built AI system to make decisions about hiring, lending, housing, or healthcare, you're a deployer. You're responsible for your own risk management program, impact assessments, consumer disclosures, and ongoing discrimination monitoring. The vendor can't absorb that responsibility for you.

What vendors are required to do is give you what you need to do your job. Developers must provide documentation like model cards and known risk disclosures to help deployers complete their impact assessments. So your vendor contracts should reflect this: make sure your AI vendors are obligated to keep you informed about how their systems work, what risks they've identified, and if they discover discrimination issues after deployment.

We're a small company. Do these laws apply to us?

Potentially. Colorado's AI Act does include a narrow exemption for companies with fewer than 50 full-time employees, but only if they don't use their own data to train or fine-tune the AI system. If you're customizing a model with proprietary data, that exemption disappears. And the other obligations, including consumer disclosures and basic governance documentation, still apply in many scenarios regardless of company size.
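As a quick self-check, the two conditions in the answer above can be written as a single test. This is a rough sketch based only on the conditions described here (fewer than 50 full-time employees, no training or fine-tuning with your own data); the function name is ours, and a `True` result means the exemption may apply, not that every obligation goes away.

```python
SMALL_DEPLOYER_EMPLOYEE_LIMIT = 50  # "fewer than 50 full-time employees"


def small_deployer_exemption_may_apply(full_time_employees: int,
                                       trains_with_own_data: bool) -> bool:
    """Both conditions must hold; customizing a model with your own data ends it."""
    return (full_time_employees < SMALL_DEPLOYER_EMPLOYEE_LIMIT
            and not trains_with_own_data)


print(small_deployer_exemption_may_apply(30, False))  # True
print(small_deployer_exemption_may_apply(30, True))   # False
print(small_deployer_exemption_may_apply(50, False))  # False
```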

About Maro

Maro helps organizations discover shadow AI, align with NIST AI RMF, and enforce AI usage policies in real time, giving security and compliance teams the visibility they need to meet Colorado's requirements and stay ahead of the regulatory landscape that follows. We’re your partner for balancing business goals with respect for human autonomy and rights. Learn more at seekmaro.com.