NIST AI RMF vs. ISO 42001: Comparing Frameworks for AI Privacy and Security

Jadon Montero

//

March 13, 2026

The Big Idea

- Two frameworks dominate AI governance right now: NIST AI RMF and ISO/IEC 42001. If you're building a defensible AI program, you need to understand both.

- They're not competing standards but complementary ones: NIST AI RMF is an operational playbook for managing AI risk day-to-day. ISO/IEC 42001 is a certifiable management system for proving governance to external parties. Most organizations will need both.

- Sequencing matters: NIST's framework is typically the right first move for U.S. organizations, especially under time pressure. It's faster to implement, operationally focused, and explicitly referenced as a safe harbor in Colorado's CAIA and Texas's TRAIGA. ISO's framework builds on that foundation and adds external and international credibility.

- Neither framework works without granular usage visibility first: You can't govern AI you don't know about. Before either standard becomes actionable, you need a complete picture of what AI is running across your organization and how it's being used.

A Federal Vacuum Filled by States

The federal approach to AI regulation has so far been patchy at best. One administration rolls out a directive; the next rolls it back. Courts and agencies lean on older anti-discrimination laws to decide what lawful use of AI looks like. Case law grows. But a comprehensive national bill to govern fair use of AI in the U.S.? Not a horse we're betting on in the short term.

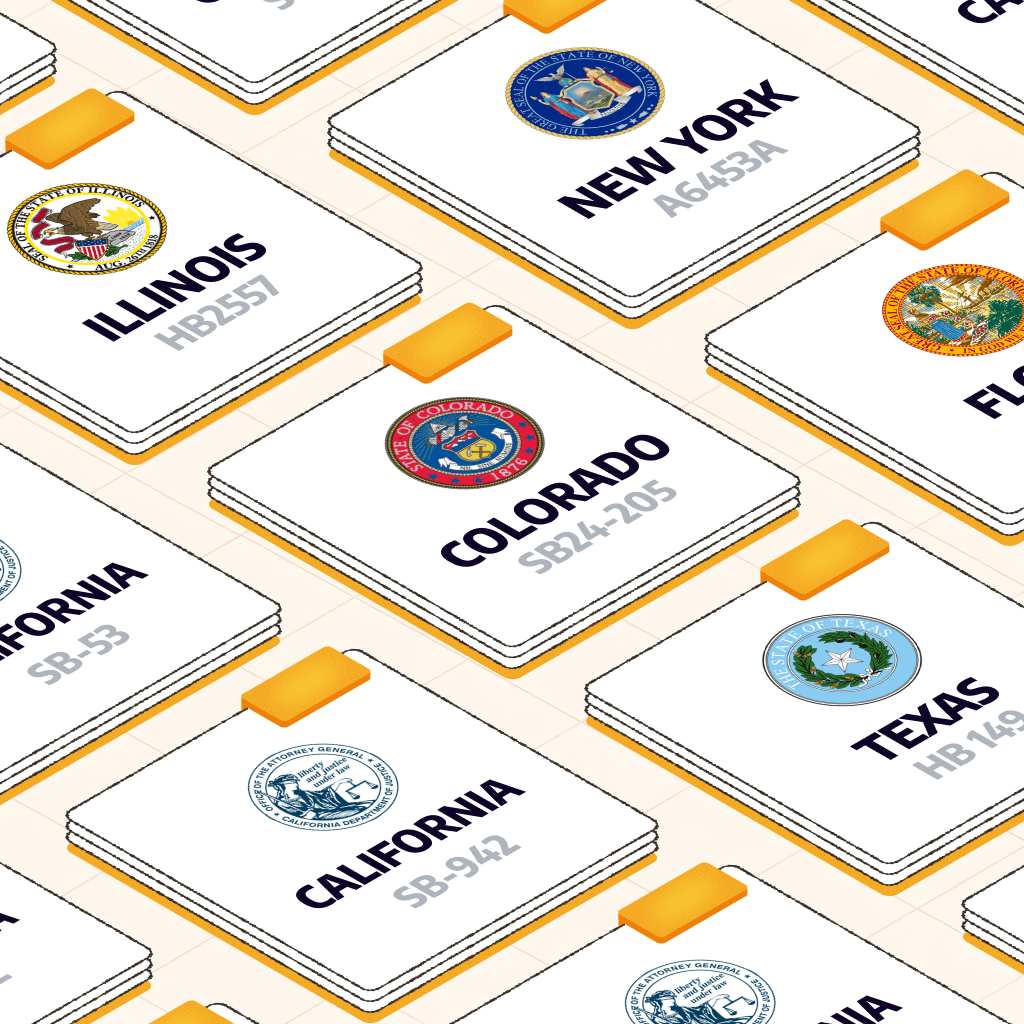

Meanwhile, states have taken matters into their own hands, just as they did with data privacy laws. Most notably, Colorado's SB24-205 (a.k.a. the Colorado AI Act, or CAIA) goes into effect June 30, 2026. It's not the first state AI law, and it certainly won't be the last, but it is the most comprehensive one passed in this country so far.

CAIA focuses on AI used to make consequential decisions about consumers in areas like hiring, lending, healthcare, insurance, education, and housing. In other words, it's designed to prevent AI-driven discrimination that could materially harm people. It's a great place to start minimizing potential harm without trying to outlaw AI.

People are going to use AI. Organizations are going to use AI. We couldn't put the genie back in the bottle, even if we wanted to, so let's minimize harm.

CAIA carries some serious penalties for infractions: up to $20,000 per violation, counted per affected consumer. With enforcement set to begin in (checks watch) less than 5 months, many organizations are scrambling to figure out how to get compliant, and fast. (P.S. You can calculate your potential fine exposure here.)

With CAIA being just one of several state AI laws, compliance can look daunting at first glance. But there's good news here.

Insight

The throughline across all these laws: NIST AI RMF alignment is quickly becoming both the go-to recommendation from states and the safe harbor mechanism of choice.

A Tale (Almost) as Old as Time

If this sounds familiar, it's because this has happened before.

The NIST Cybersecurity Framework (NIST CSF) was introduced as voluntary guidance in 2014. Over the next decade, it became the de facto standard referenced in state laws, federal guidance, and court decisions defining what "reasonable care" looks like in cybersecurity.

The same pattern is playing out now with AI:

- Colorado's AI law offers a rebuttable presumption of reasonable care for NIST AI RMF or ISO 42001 alignment.

- Texas's TRAIGA provides an affirmative defense for organizations that comply with NIST AI RMF.

- California's regulations reference NIST and ISO as compliance benchmarks.

Even if specific state laws face federal preemption challenges, NIST alignment is likely to remain a recognized marker of reasonable care and positions organizations well for whatever follows. And ISO 42001 certification puts you in a solid position to prove your governance whenever necessary, from the courtroom to the enterprise sales floor.

NIST AI RMF vs. ISO 42001: What You Need to Know

So, let's walk through NIST AI RMF (and its cousin ISO 42001) to understand how these frameworks intersect with each other and with state laws. We'll cover how you can use them to protect your organization and the people impacted by your AI usage.

What Exactly is the NIST AI RMF?

First, let's break it down. NIST is the National Institute of Standards and Technology. NIST was founded all the way back in 1901 with the goal of boosting U.S. competition in the world market by improving our measurement systems. (Since we decided to break the mold and eschew that whole metric system thing…)

Over time, it has come to own not just measurement but critical standards that define how we use science and technology, with the goal of improving economic and social outcomes.

Though it is a government organization, NIST does not technically pass laws. Some of its frameworks are defined in part by laws and policies, but frameworks like CSF and AI RMF are not legally binding. They are technical guidelines and best practices to follow to reduce harm and ensure fairness.

For those of us in the tech sector, NIST has become well-known for frameworks across cybersecurity, privacy, risk management, and much more.

It's no surprise that NIST has stepped in to provide guidance on fair use of AI. And, again, no surprise that states have turned to NIST to build their own laws and offer guidance for organizations aiming to comply.

The NIST Artificial Intelligence Risk Management Framework (first published in 2023) organizes AI governance across four functions:

- Govern: Establishing policies, accountability structures, and risk culture

- Map: Identifying and categorizing AI risks in context

- Measure: Analyzing and assessing those risks

- Manage: Responding to, monitoring, and documenting risk over time

Companies that build their AI programs around these functions will be well-positioned across the current and likely future landscape of state laws.
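One simple way to check whether a program is actually built around all four functions is a coverage check: tag each activity with the function it mainly supports and flag any function left empty. This is a minimal sketch under our own assumptions; the activity names and their function assignments are hypothetical examples, not an official NIST mapping.

```python
from enum import Enum


class RMFFunction(Enum):
    """The four NIST AI RMF core functions."""
    GOVERN = "Govern"
    MAP = "Map"
    MEASURE = "Measure"
    MANAGE = "Manage"


def uncovered_functions(activities: dict[str, RMFFunction]) -> list[RMFFunction]:
    """Return the RMF functions that no program activity is tagged with."""
    covered = set(activities.values())
    # Iterating over the Enum yields members in definition order.
    return [function for function in RMFFunction if function not in covered]


# Hypothetical program activities, each tagged with the function it mainly supports.
program = {
    "AI acceptable-use policy": RMFFunction.GOVERN,
    "Risk categorization for the hiring model": RMFFunction.MAP,
    "Bias testing for the hiring model": RMFFunction.MEASURE,
}

print([function.value for function in uncovered_functions(program)])  # ['Manage']
```

Real programs usually have activities that support more than one function; mapping each activity to a set of functions instead of a single one is a straightforward extension.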

The Cyber AI Profile

In December 2025, NIST also released a companion framework: the Cybersecurity Framework Profile for AI (the Cyber AI Profile). This one specifically covers how to secure AI systems from attacks (Secure), use AI in cyber defense (Defend), and defend against AI-enabled threats (Thwart). It's not yet finalized, but it's worth watching closely.

Worth noting: Cyber insurers are expected to move toward requiring Cyber AI Profile alignment, much as many now look for CSF alignment.

To sum it up, NIST AI RMF sets organizations up well to meet current and future state AI legislation. The Cyber AI Profile will also be key for organizations looking to defend against AI-enabled attacks, use AI in defense, and obtain cyber insurance to protect their interests.

What Exactly is ISO/IEC 42001?

ISO stands for the International Organization for Standardization, a non-governmental body founded in 1947 with representatives from standards organizations in over 160 countries. It's basically NIST, but make it global. (The IEC, which you'll sometimes also see referenced, is the International Electrotechnical Commission; the two work together frequently on joint efforts like 42001. In this article, we'll call the framework ISO 42001 for short.)

ISO develops and publishes international standards across virtually every industry, from food safety to information security. If you've ever worked with ISO 27001 (information security management) or ISO 9001 (quality management), you already know how these standards work in practice.

ISO standards are different from NIST frameworks in one important way: they're certifiable.

Organizations can engage an accredited third-party auditor to formally assess their compliance and issue a certificate. That certificate is internationally recognized and carries weight with regulators, enterprise customers, and partners in a way that self-attestation doesn't.

ISO/IEC 42001, published in December 2023, is the first international standard specifically designed for AI management systems. It gives organizations a structured, auditable framework for governing AI responsibly across the full lifecycle, from development through deployment and ongoing operation.

The standard is built around a Plan-Do-Check-Act (PDCA) model, the same continuous improvement structure used by ISO 27001. If your organization already has an ISO 27001 program, the ISO 42001 process will feel familiar, and there's meaningful overlap between the two that reduces implementation effort. (Insert sigh of relief here.)

ISO 42001 covers:

- Organizational governance: Defining roles, responsibilities, and accountability structures for AI oversight

- Risk management: Identifying, assessing, and mitigating technical, operational, and ethical risks across AI systems

- Ethical principles: Ensuring AI systems are transparent, fair, and respectful of privacy and human rights

- Continuous improvement: Regularly reviewing and updating AI management practices as systems and regulations evolve

For organizations operating internationally, ISO 42001 certification is particularly valuable. ISO standards are widely recognized across the EU, UK, Asia-Pacific, and beyond, and they're increasingly referenced in international procurement and vendor assessment processes.

It's also worth noting that some compliance assessors now offer integrated audits that cover ISO 42001, NIST AI RMF, and the EU AI Act simultaneously, which can significantly reduce the time and cost of achieving alignment across frameworks.

In the context of U.S. state laws, ISO 42001 is explicitly named alongside NIST AI RMF as a safe harbor mechanism in Colorado and California. Either framework can satisfy the "reasonable care" standard.

For organizations that want the most defensible compliance posture, especially those selling to enterprise customers who will ask for proof, ISO 42001 certification is a meaningful differentiator.

NIST AI RMF vs. ISO 42001: Subtle but Key Differences

NIST AI RMF and ISO 42001 are often referenced in the same breath, and for good reason. They have a lot of overlap, and many state laws reference both as safe harbor mechanisms.

But they're not the same thing, and understanding the distinction matters.

The short version:

- NIST AI RMF is a flexible playbook for managing AI risk.

- ISO 42001 is a formal, certifiable management system.

Important

Most mature organizations will want both. Let's examine the differences in a bit more detail.

Certification

This is the biggest practical difference. ISO 42001 compliance is certifiable by an accredited third-party auditor. Organizations that complete the process get a formal certificate they can show to regulators, customers, and partners. On the other hand, NIST AI RMF has no certification mechanism.

Organizations can align with it, implement it, and even have their practices audited against it, but there's no external body issuing a certificate. It relies on self-attestation.

For procurement and enterprise sales, that distinction is real. Saying you're "NIST-aligned" and having an ISO 42001 certificate are different kinds of proof.

Structure

ISO 42001 follows a Plan-Do-Check-Act (PDCA) model, the same structure used by ISO 27001 for information security. If your organization already has an ISO 27001 program, ISO 42001 will feel familiar. It's a top-down management system, built around organizational governance, documentation, and continuous improvement cycles.

NIST AI RMF, by contrast, is organized around four functions: Govern, Map, Measure, and Manage. It's more of a bottom-up technical playbook, designed to give risk practitioners and engineering teams concrete guidance for identifying and managing AI-specific risks throughout the AI lifecycle.

It's flexible by design, which makes it easier to tailor to your specific context, but also means there's more room for interpretation.

Focus

ISO 42001 is management-first. It's designed to help organizations establish and maintain an AI Management System (AIMS), covering:

- Governance structures

- Roles

- Accountability

- Ethical oversight

NIST AI RMF is risk-first. Its core goal is helping organizations build trustworthy AI by mapping, measuring, and managing risks at the technical level. It emphasizes fairness, transparency, security, and accountability, but approaches those goals through the lens of risk identification and mitigation rather than organizational management.

Which one should you focus on?

ISO for Proof

ISO 42001 is the better choice for demonstrating compliance to regulators, customers, and enterprise partners. If you're responding to security questionnaires, pursuing international markets, or trying to show the board or shareholders you have a formal AI governance program, ISO 42001 certification is the more compelling credential.

NIST for Best Practices

NIST AI RMF is the better operational tool for the teams actually building and deploying AI systems. It provides actionable guidance and technical best practices that engineers and risk practitioners can implement, and it maps directly to the safe harbor provisions in Colorado's, Texas's, and California's laws (among others).

Overlap for the Win

Good news: Most organizations won't have to choose. Why? The two frameworks are designed to be complementary, and using both is increasingly considered best practice.

A common approach:

- Use ISO 42001 as the overall governance structure, establishing the management system, accountability structures, and continuous improvement processes.

- Use NIST AI RMF to provide the day-to-day operational controls your teams need.

There's also meaningful overlap between the frameworks, which reduces the implementation burden. (A huge relief, we know!) Controls and documentation that satisfy NIST AI RMF requirements will often map directly to ISO 42001 requirements.

Even better? Many compliance tools and auditors now offer integrated assessments that cover both simultaneously. Two birds, one stone. (Actually, once you think about all the regulations these provide safe harbor for, it's more like a whole flock of birds with one stone… Not to get too gruesome.)

So it's less a question of pitting NIST AI RMF against ISO 42001 and choosing just one, and more a matter of understanding the overlap and applying each where it makes the most sense for your organization.

OK, OK, but what should I do right now?

For organizations preparing for Colorado's June 30 deadline specifically: NIST AI RMF alignment is a practical first step, since Colorado's safe harbor is framed around it and it's operational enough to implement quickly. ISO 42001 certification takes longer and involves a formal audit process, but it's a worthwhile longer-term investment for organizations that want the most defensible compliance posture.

NIST AI RMF vs. ISO 42001: A Quick Visual Rundown

| | NIST AI RMF | ISO/IEC 42001 |
|---|---|---|
| Developed By | NIST (U.S. government) | Delegates from 25 countries |
| Used By | U.S. organizations and those doing business with U.S. organizations | Organizations in 160+ countries |
| Structure | Flexible playbook | Formal, certifiable management system |
| Best Used As | Day-to-day controls | Governance structure |
| Auditable | Yes, informally (no standardized process or accreditation body) | Yes (standardized and accredited) |
| Certifiable | No (self-attestation only) | Yes, officially by a third party |
| Focus | Risk-first, technically focused | Management-first, organizationally focused |
| Best For | Ensuring technical alignment with state regulations and industry best practices | Proving compliance to auditors, customers (especially enterprise, international, and highly regulated ones), and legal bodies |
| Safe Harbor | Yes, in most state AI laws that offer one | Yes, in several states (though NIST is referenced more often because it is a domestic framework) |
| Speed of Alignment | Faster, highly operational | Slower; certification requires a formal audit process |
| Primary Users | CTOs, engineers, risk practitioners (with much overlap) | Governance and compliance pros (with much overlap) |
| Overlap | Significant, with integrated assessments increasingly available | Significant, with integrated assessments increasingly available |

Where Does This Leave You?

AI regulation in the U.S. is moving fast, and it's only going to accelerate. The good news is that NIST AI RMF and ISO 42001 give organizations a real path forward, one that holds up across state lines, in enterprise procurement, and in a courtroom, if it ever comes to that.

Savvy organizations aren't waiting for federal clarity or a single definitive law. They're building governance programs now, aligned to frameworks that regulators already trust. And they're looking beyond the legal and business compliance "floor" to focus on real risk to real people.

Start with observability. You can't assess, govern, or disclose AI systems if you don't know about them or how your workforce is using them. From there, NIST AI RMF gives you the operational foundation to move quickly toward Colorado's June 30 deadline. ISO 42001 certification is the longer play, but it's the one that opens the most doors.

The window is narrowing, but we're lucky these helpful frameworks exist, and the path forward is clear enough for action. We've got this!

Not sure what AI is being used across your organization? Start with a Shadow AI Assessment. Gaining visibility is the first step to achieving compliance and doing right by the people who trust you with their data.

FAQs About NIST AI RMF vs. ISO 42001

Is NIST AI RMF legally required?

No. NIST AI RMF is a voluntary framework. No federal law mandates it. That said, several state AI laws, including Colorado's AI Act (CAIA) and Texas's TRAIGA, provide explicit safe harbor protection for organizations that align with it. In practice, that makes it close to a de facto requirement for any organization deploying high-risk AI in those states.

Is ISO 42001 legally required?

Also no. ISO 42001 is a voluntary international standard. But like NIST AI RMF, it's named as a safe harbor mechanism in Colorado and California, and it carries significant weight in enterprise procurement and international regulatory contexts. Organizations seeking the most defensible compliance posture will want to pursue certification.

What's the difference between NIST AI RMF and ISO 42001?

The short version: NIST AI RMF is a flexible, risk-focused playbook best suited for technical teams. ISO 42001 is a formal, certifiable management system best suited for demonstrating compliance to external parties. Most mature organizations will want to use both. See the full comparison table above for a detailed breakdown.

What is "high-risk AI" under Colorado's AI Act?

Colorado's AI Act defines high-risk AI as any system that makes or substantially influences a consequential decision affecting a Colorado resident. Consequential decisions include those related to employment, housing, healthcare, financial services, education, insurance, legal services, and government services. If your AI touches any of those areas and your company does business in Colorado, you're likely in scope.

What happens if we're not compliant by June 30, 2026?

Colorado's Attorney General can issue a notice of violation. After that, you have a 60-day cure window to fix the issue. If you don't, penalties begin stacking at up to $20,000 per violation, counted per affected consumer. A single compliance failure affecting a large consumer population can add up to significant exposure quickly.
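To see how quickly that stacks up, here's a back-of-the-envelope calculation. It's a minimal sketch that assumes every affected consumer counts as a separate violation at the full $20,000 cap and that the cure window has lapsed; actual penalties are decided case by case and can be far lower. The function name `max_exposure_usd` is our own, for illustration.

```python
# Worst-case Colorado AI Act penalty exposure, under simplifying assumptions:
# each affected consumer counts as one violation, every violation draws the
# $20,000 maximum, and the 60-day cure window has lapsed without a fix.
MAX_PENALTY_PER_VIOLATION_USD = 20_000


def max_exposure_usd(affected_consumers: int,
                     penalty_per_violation: int = MAX_PENALTY_PER_VIOLATION_USD) -> int:
    """Return the theoretical maximum penalty in USD."""
    if affected_consumers < 0:
        raise ValueError("affected_consumers must be non-negative")
    return affected_consumers * penalty_per_violation


for consumers in (10, 500, 10_000):
    print(f"{consumers:,} affected consumers -> up to ${max_exposure_usd(consumers):,}")
# 10 affected consumers -> up to $200,000
# 500 affected consumers -> up to $10,000,000
# 10,000 affected consumers -> up to $200,000,000
```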

Can we just use NIST AI RMF to satisfy Colorado's requirements?

Yes, NIST AI RMF alignment creates a rebuttable presumption of "reasonable care" under Colorado's law. That's meaningful legal protection. ISO 42001 also satisfies Colorado's safe harbor. Either works, but NIST is typically faster to implement and more immediately operational, making it the practical first step for organizations under time pressure.

What is shadow AI, and why does it matter for compliance?

Shadow AI refers to AI tools and systems being used within an organization without official IT or security oversight. Think personal ChatGPT accounts, AI features embedded in SaaS tools, or consumer AI apps used for work tasks. It matters for compliance because Colorado's new AI law requires organizations to assess, govern, and monitor their AI systems. You can't do any of that for systems you don't know exist. Shadow AI is one of the most common reasons organizations find themselves unable to meet compliance requirements even when they have governance programs in place.
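At its simplest, spotting shadow AI means comparing the AI tools you observe in use against the ones you've approved. The sketch below assumes you already have usage events as (user, tool) pairs, for example pulled from proxy or SaaS logs; the tool names, users, and the `find_shadow_ai` helper are hypothetical, and real discovery tooling has to do much more, such as detecting AI features embedded in otherwise-approved apps.

```python
from collections import defaultdict
from collections.abc import Iterable


def find_shadow_ai(usage_events: Iterable[tuple[str, str]],
                   approved_tools: set[str]) -> dict[str, set[str]]:
    """Map each unapproved AI tool to the set of users seen using it."""
    shadow: defaultdict[str, set[str]] = defaultdict(set)
    for user, tool in usage_events:
        if tool not in approved_tools:
            shadow[tool].add(user)
    return dict(shadow)


# Hypothetical usage events (user, tool) and approved inventory.
events = [
    ("alice", "approved-assistant"),
    ("bob", "personal-chatbot"),
    ("carol", "personal-chatbot"),
    ("dave", "saas-ai-writing-feature"),
]
approved = {"approved-assistant"}

for tool, users in sorted(find_shadow_ai(events, approved).items()):
    print(tool, sorted(users))
# personal-chatbot ['bob', 'carol']
# saas-ai-writing-feature ['dave']
```

Grouping by tool rather than by user makes it easy to decide which tools to approve, replace, or block, while still knowing whom to follow up with.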

Do these frameworks apply to AI we buy from vendors, or just AI we build?

Both. Colorado's law applies to "deployers," which includes any organization using a high-risk AI system to make consequential decisions, regardless of whether they built it or licensed it from a third party. If you're using an AI-powered hiring tool or loan decisioning system from a vendor, you're still on the hook for compliance. Vendor contracts should reflect this, and you'll want documentation from your vendors to support your own impact assessments.

We're a small company. Do these laws apply to us?

Potentially. Colorado's AI Act does include a narrow exemption for companies with fewer than 50 full-time employees, but only if they don't use their own data to train or fine-tune the AI system. If you're customizing a model with proprietary data, that exemption disappears. And the other obligations, including consumer disclosures and basic governance documentation, still apply in many scenarios regardless of company size.
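The high-risk and small-company answers above boil down to a pair of checks. Here's a rough triage sketch of that logic, assuming simplified domain labels of our own; it's a starting point for deciding what to review with counsel, not a legal determination, and the statute's actual definitions carry nuances this ignores. All class and function names are hypothetical.

```python
from dataclasses import dataclass

# Consequential-decision areas, as listed in the high-risk AI answer above.
CONSEQUENTIAL_DOMAINS = {
    "employment", "housing", "healthcare", "financial services",
    "education", "insurance", "legal services", "government services",
}


@dataclass
class AISystem:
    name: str
    decision_domains: set[str]  # areas where it makes or substantially influences decisions
    affects_colorado_residents: bool


@dataclass
class Deployer:
    full_time_employees: int
    trains_with_own_data: bool  # trains or fine-tunes the model on its own data


def likely_high_risk(system: AISystem) -> bool:
    """Flag systems that touch a consequential domain and reach Colorado residents."""
    touches_domain = bool(system.decision_domains & CONSEQUENTIAL_DOMAINS)
    return touches_domain and system.affects_colorado_residents


def small_deployer_exemption_may_apply(deployer: Deployer) -> bool:
    """Fewer than 50 full-time employees and no training on the deployer's own data."""
    return deployer.full_time_employees < 50 and not deployer.trains_with_own_data


resume_ranker = AISystem("resume-ranker", {"employment"}, affects_colorado_residents=True)
startup = Deployer(full_time_employees=30, trains_with_own_data=True)

print(likely_high_risk(resume_ranker))              # True
print(small_deployer_exemption_may_apply(startup))  # False (it trains on its own data)
```

The two checks are kept separate on purpose: as noted above, the exemption is narrow, and other obligations such as consumer disclosures can still apply to a high-risk system even when it does.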

About Maro

Maro helps organizations align with NIST AI RMF and ISO 42001 and enforce AI usage policies in real time, giving security and compliance teams the visibility they need to meet requirements and stay ahead of the regulatory landscape to come. We're your partner for balancing business goals with compliance needs. Learn more at seekmaro.com.