AI Regulation in the US: The Federal vs. State Divide

Jadon Montero

//

April 9, 2026


In the span of two weeks, the White House released a national AI policy framework, a Republican senator introduced draft federal AI legislation, and progressive lawmakers counter-proposed a data center moratorium. None of these will be the final word on AI regulation in the U.S., but together, they signal a clear directional shift that security and GRC teams can't afford to ignore.

The bottom line: the U.S. is moving toward federal preemption of state AI laws, and the current federal approach doesn't give enterprises much to work with.

Recent Events

The Trump administration has been methodically building the case for federal control over AI policy since late 2025. A December executive order directed the DOJ to challenge state-level AI regulations and tied federal funding to state compliance. This March, that direction crystallized into proposed legislation.

Senator Marsha Blackburn's TRUMP AMERICA AI Act, released March 18, proposes a single national AI rulebook, preempting the patchwork of state laws that have been developing over the past few years. It focuses on child safety, creator rights, anti-bias provisions, and federal research infrastructure. Two days later, interestingly enough, the White House released its own formal policy framework reinforcing many of the same priorities: limit state-level fragmentation, reduce legal uncertainty, and keep AI development moving.

On the other side, Representative Alexandria Ocasio-Cortez and Senator Bernie Sanders introduced a bill to pause new data center construction until national safeguards are in place. The measure is unlikely to advance in the near term given Republican control of Congress, but it reflects a significant and growing constituency concerned about AI's infrastructure, labor, and environmental impacts.

Meanwhile, Colorado's SB24-205 (still the most comprehensive state AI law on the books) is scheduled to begin enforcement in June 2026, though many expect another delay given the federal pressure building around it.


The Problem With Where We're Headed

Federal preemption of state AI laws isn't inherently bad. A single national standard would reduce compliance complexity for organizations operating across states. That's a genuine benefit!

But here's what the current federal approach is missing: technical standards with teeth.

Neither the White House framework nor the Blackburn bill establishes a safe harbor aligned to recognized frameworks like NIST AI RMF or ISO 42001. The Blackburn bill references high-risk AI systems by domain and defines frontier AI by compute threshold, but does not provide an enumerated classification taxonomy, leaving organizations to self-classify with material liability exposure for incorrect determinations.

The bill creates a duty of care for developers, classifies AI systems as products, and opens multiple enforcement pathways (FTC, Attorney General, state AGs, private action), but does not map role-differentiated obligations across the AI supply chain from foundation model provider to deployer to enterprise end-user.

What that means in practice is that governance accountability shifts directly to organizations large and small, without a clear map for what "good" looks like. Responsibility for safe and effective AI use becomes something closer to the honor system. However, several state laws (Texas TRAIGA, California AB 2013) already offer safe harbor for organizations implementing NIST AI RMF or ISO 42001, and the Blackburn bill’s codification of NIST’s AI standards role signals these frameworks will likely anchor future federal compliance expectations.

Compare that to state approaches like Colorado's SB24-205, which defines high-risk AI through its impact on consequential decisions around employment, housing, healthcare, credit, etc., and places explicit accountability on both developers and corporate users. It is more prescriptive and more complex to navigate, and questions remain about its enforceability and effective date.

The federal approach, as it stands, is broader in scope but lighter in obligation and guidance. Organizations should treat NIST AI RMF alignment as the current defensible standard of care and build accountability chains, classification taxonomies, and audit readiness independently of federal action.
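One way to start on a classification taxonomy is a simple triage rule over your AI inventory. The sketch below is illustrative only: the domain list is borrowed from the consequential-decision areas named in this article, and `AISystem`, `classify`, and the tier labels are hypothetical names, not terms defined by any statute or framework.

```python
from dataclasses import dataclass

# Assumption: domains taken from the consequential-decision areas discussed
# in this article. This is not a legal definition of "high-risk".
CONSEQUENTIAL_DOMAINS = frozenset(
    {"employment", "housing", "healthcare", "credit", "benefits"}
)


@dataclass(frozen=True)
class AISystem:
    """Hypothetical inventory record for one AI system."""
    name: str
    domains: frozenset            # business domains the system touches
    influences_decisions: bool    # does its output materially shape a decision?


def classify(system: AISystem) -> str:
    """Return a triage tier: 'high-risk', 'review', or 'standard'."""
    touches_consequential = bool(system.domains & CONSEQUENTIAL_DOMAINS)
    if touches_consequential and system.influences_decisions:
        return "high-risk"
    if touches_consequential:
        # In a sensitive domain but not decision-shaping: send to human review.
        return "review"
    return "standard"


screener = AISystem("resume-screener", frozenset({"employment"}), True)
notes_bot = AISystem("meeting-notes", frozenset({"productivity"}), False)
print(classify(screener), classify(notes_bot))
```

Keeping the domain list in one place makes it easier to revise the rule as state or federal definitions change.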

The notable distinctions between the two approaches, as we've unpacked:

| Category | State Approaches | Federal Policy Approach (Current Direction) | Org Position |
|---|---|---|---|
| Regulatory Philosophy | Prescriptive, risk-based with safe harbor tied to NIST/ISO frameworks | Light-touch, pro-innovation; defers to FTC rulemaking and voluntary NIST guidance | Implement NIST AI RMF now; treat state safe harbors as compliance floor |
| Scope of Impact | Broad definition of harm tied to consequential decisions (employment, housing, healthcare, finance, etc.) | Narrower scope focused on protected populations (children, creators, communities) | Any AI output materially influencing employment, credit, housing, healthcare, or benefits = high-risk |
| Accountability Model | Clear accountability placed on creators and corporate users of AI systems | Developer duty of care + product liability; no role differentiation across supply chain | Map supply chain per system; RACI matrix for lifecycle stages; allocate in vendor contracts |
| Risk Definition | Formal classification of "high-risk AI systems" with defined obligations | High-risk domains referenced but no taxonomy introduced; orgs self-classify with liability exposure | Implement flexible taxonomy systems to account for shared standards (something like EU Annex III) + Blackburn domains; quarterly review board |
| Consumer Protection | Explicit focus on bias, discrimination, and individual impact | Broader, less prescriptive protections; targeted priorities (e.g., child safety) | Bias program covering protected-class and algorithmic fairness; audit-ready documentation now |
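The per-system RACI mapping suggested under Accountability Model can be checked mechanically. Here is a minimal sketch, assuming a hypothetical three-stage lifecycle and made-up party names; the stage list, the `accountability_gaps` helper, and the sample data are illustrative and not drawn from any framework or bill.

```python
# Assumption: a simplified three-stage lifecycle. Use your own stage names.
LIFECYCLE_STAGES = ("development", "deployment", "monitoring")


def accountability_gaps(raci: dict) -> list:
    """Return lifecycle stages that lack exactly one Accountable ('A') party.

    `raci` maps stage name -> {party name: RACI letter}.
    """
    gaps = []
    for stage in LIFECYCLE_STAGES:
        assignments = raci.get(stage, {})
        accountable = [party for party, role in assignments.items() if role == "A"]
        if len(accountable) != 1:
            gaps.append(stage)
    return gaps


# Hypothetical RACI entries for a single AI system.
resume_screener = {
    "development": {"model_vendor": "A", "security": "C"},
    "deployment": {"hr_ops": "R", "security": "C"},  # no accountable party
    "monitoring": {"grc": "A", "hr_ops": "R"},
}
print(accountability_gaps(resume_screener))  # ['deployment']
```

A gap flagged here marks where a vendor contract or internal policy still needs to assign ownership.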

What This Means for Your Team

Depending on your company's primary product or service, you may be disproportionately affected as AI regulations are beginning to define what must be protected (and who is accountable) based on how your business uses and delivers AI.

The scope of AI risk is also expanding well beyond traditional security concerns to include content integrity and bias, intellectual property, platform liability, and infrastructure impacts. GRC and security teams are increasingly pulled into strategic oversight of these areas, whether that's formally defined in your org chart or not.

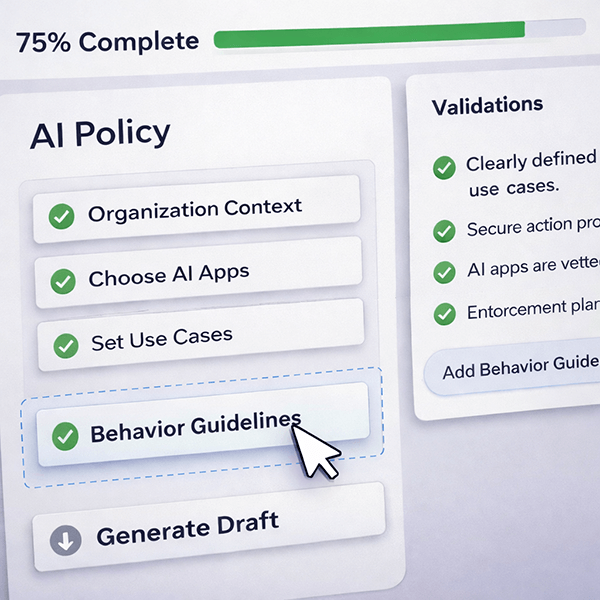

That means tighter cross-functional alignment is becoming a practical necessity, not just a best practice. Security, Legal, IT, HR, and Product all have a stake in how AI is governed. And the policies that matter aren't just what your organization has written down; they're what's actually happening with the tools your teams use every day.

A few things worth noting as you think about where to focus:

- Existing frameworks are the best proxy for standards today. NIST, ISO, and emerging industry consortiums like AIUC-1 are the closest thing we have right now to a recognized baseline for AI governance. Aligning to these frameworks is the most defensible position available to companies while the regulatory environment continues to develop.

- Start documenting your standard of care now. The Blackburn bill’s duty-of-care standard will be defined by FTC rulemaking, but courts will evaluate what constituted “reasonable care” based on industry practice. Organizations that can demonstrate alignment with NIST AI RMF, ISO 42001, or both will be better positioned to argue they met the standard.

- Market forces may formalize what regulation hasn't, particularly cyber insurers. We're seeing early signals that insurers are beginning to define de facto governance requirements as part of underwriting AI risk. It's another signal that the market is trying to catch up to rapid technological advancements. Insurers will likely align with industry frameworks as the best proxy, too. We'll be keeping an eye on this.

- This won't resolve quickly. Anyone who tells you the regulatory picture will be clear by end of year is being optimistic. AI development may be moving into its terrible twos, but it's still young and developing faster than any technology we've seen before. Plan for continued uncertainty, which means building governance infrastructure that's adaptable, not just compliant with a snapshot in time.

Don't Wait for Perfect Clarity

Historically, states have been the ones to move first on emerging tech regulation. Privacy law followed that pattern. AI may follow a similar trajectory, with state-level pressure pushing the federal government toward action. The difference today: state and federal approaches diverge sharply, and federal preemption appears likely to win this one. But the federal framework that's taking shape doesn't give security and GRC teams much to build on yet.

That's not a reason to wait. It's a reason to start now by grounding your governance approach in trusted frameworks that already exist. NIST AI RMF and ISO 42001 provide strong foundations for early governance maturity as the landscape evolves. While federal policy leaves much of the responsibility to the private sector, these frameworks offer clear guidance on what matters via impact assessments, defining high-risk systems, continuous monitoring, and more granular enforcement, all hallmarks of a mature security program.

Compliance is a starting point, not the destination. And investing now can meaningfully reduce your risk surface and build the resilience needed for what comes next.

Want to learn more about where your organization stands with AI security today? Take our Shadow AI Assessment and get your NIST AI RMF readiness roadmap.


Frequently Asked Questions

What is federal preemption of state AI laws?

Federal preemption means a national AI law would override existing and future state-level AI regulations, replacing the current patchwork of state laws with a single federal standard. The Trump administration has been actively pursuing this through executive action and proposed legislation, arguing it reduces compliance complexity and legal uncertainty for businesses operating across multiple states.

What is the TRUMP AMERICA AI Act?

The TRUMP AMERICA AI Act is a federal AI bill introduced by Senator Marsha Blackburn in March 2026. It proposes a single national AI rulebook that would preempt state AI laws, with a focus on child safety, creator rights, anti-bias provisions, and federal AI research infrastructure. It does not establish a formal classification system for high-risk AI or define a compliance baseline for enterprises.

What is Colorado's SB24-205 and will it take effect in June 2026?

Colorado's SB24-205 is currently the most comprehensive state AI law in the U.S. It defines high-risk AI systems based on their use in consequential decisions (e.g., employment, housing, healthcare, credit) and places explicit accountability on both AI developers and corporate users. Enforcement is scheduled to begin June 2026, though many expect another delay given increasing federal pressure to preempt state-level regulation.

What's missing from the current federal AI governance approach?

The current federal approach lacks technical standards with enforcement teeth. Neither the White House policy framework nor the Blackburn bill establishes a safe harbor tied to recognized frameworks like NIST AI RMF or ISO 42001, a formal classification of high-risk AI systems, or a defined accountability chain from developer to deployer to enterprise. Without these, governance responsibility falls to organizations without a clear map for what "good" looks like.

Which AI governance frameworks should enterprises align to right now?

In the absence of enforceable federal standards, NIST AI RMF and ISO 42001 are the most defensible baselines available. Both provide structured guidance on impact assessments, high-risk system classification, continuous monitoring, and accountability, all hallmarks of a mature AI governance program. Emerging industry consortiums like AIUC-1 are also worth watching as the standards landscape continues to develop.

How should security and GRC teams prepare for AI regulation uncertainty?

Build governance infrastructure that's adaptable, not just point-in-time compliant. That means aligning to established frameworks (NIST AI RMF, ISO 42001), fostering cross-functional alignment across Security, Legal, IT, HR, and Product, and monitoring emerging signals like cyber insurer underwriting requirements. The regulatory picture won't be clear by year-end. The teams that invest now will be better positioned regardless of which direction federal policy settles.

About Maro

Maro is a cognitive security agent and AI security solution that helps organizations discover, assess, and govern shadow AI and AI exposure. As the regulatory landscape around AI governance and AI regulations continues to shift, Maro provides security and GRC teams with the continuous observability and NIST AI RMF-aligned policy enforcement needed to build a defensible program today, not just when the rules are finalized. Learn more at seekmaro.com.